Utilizing AI in Talent Acquisition? Five Questions to Ask Before Trusting These Technologies


AI is no longer an emerging trend in talent acquisition. It is already built into how organisations source, screen, assess and shortlist candidates at scale.

As AI assumes a greater role in hiring, the conversation has begun to change. To be competitive, companies need more than speed and efficiency.

Talent leaders, legal teams and procurement functions are now asking a more fundamental question.

Can this AI-based technology be trusted?

Trust here is not about intent or branding. It is about fairness, transparency, auditability and regulatory compliance. As scrutiny of AI use intensifies, organisations must make sure they deploy technology that can stand up to regulatory review, legal challenge and reputational risk.

At Recruitment Smart Technologies, we firmly believe that trust in AI has to be earned through evidence, not claims. This article sets out five questions every organization should ask before using AI in its hiring process.

Why Trust in AI Hiring Technology Matters

Hiring decisions shape careers, livelihoods and organisational culture. AI systems that influence those decisions carry real consequences.

Authorities and regulators have acknowledged this. Many jurisdictions now either actively regulate or closely monitor the use of AI in recruiting.

- The EU AI Act classifies a range of hiring-related AI systems as high-risk.

- New York City Local Law 144 requires bias audits of automated employment decision tools.

- Colorado SB 205 prohibits algorithmic discrimination.

- California's Fair Employment and Housing Act (FEHA) prohibits discrimination in employment.

At the same time, candidates are increasingly aware of how technology is used in hiring and expect fair processes.

The convergence of regulation, candidate expectations and enterprise risk means that trust in AI is no longer optional, particularly given how much recruitment contributes to the growth of a business.

Understanding Protected Characteristics in AI Recruitment

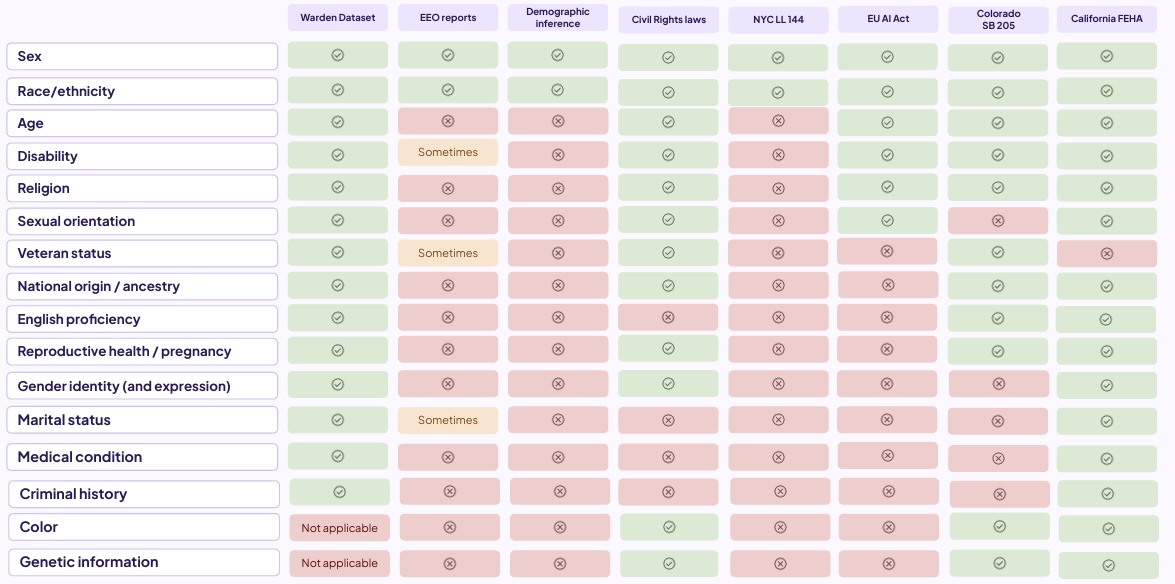

How a system treats protected characteristics is central to responsible AI in recruitment. These are the characteristics that employment and civil rights laws protect from discrimination.

The protected classes vary by jurisdiction but usually include sex, race, age, disability, religion and national origin, among others. Keeping AI systems fair and compliant with respect to these classes is the main reason for bias testing and governance.

Independent audits examine which protected characteristics are covered under each relevant data source and regulatory framework, which makes the fairness evaluation transparent.

The image below summarises the coverage of protected characteristics in independent AI audits.

Protected Characteristics Coverage in AI Audits

This coverage forms the backbone of how AI systems are evaluated for fairness, legal alignment, and risk exposure.

Five Questions to Ask Before Trusting AI in Talent Acquisition

1. How does the AI align with hiring and artificial intelligence regulations?

AI systems used in recruitment operate within a rapidly changing regulatory landscape. Ask providers how their technology complies with existing and upcoming legislation.

Reliable vendors should be able to explain how their systems map to frameworks such as the EU AI Act and US employment standards, and how they stay aligned as regulations change.

2. Which protected characteristics are considered, and why?

Different AI systems approach fairness differently. Organisations should know which protected characteristics are covered in audits and how those assessments support compliance and equitable outcomes.

This is not about using sensitive data in hiring decisions. It is about making sure protected groups are not disadvantaged, and about being able to demonstrate that fairness when challenged.

3. Is bias assessment a continuous or one-time process?

AI systems evolve over time. Data changes. Usage patterns change. Models are updated.

Relying on a single bias assessment at launch is inadequate. Ask whether bias tests and fairness audits are performed on an ongoing basis, validated independently and built into the AI lifecycle.

Regular evaluations reduce long-term risk and build confidence that fairness is being sustained.

4. Can the AI explain its recommendations?

Explainability is essential when hiring in regulated environments.

Recruiters and compliance auditors should be able to follow the logic of the AI model. When decisions cannot be explained in human terms, they become hard to defend.

Trustworthy AI should be transparent without exposing sensitive data or proprietary logic.

5. What governance tools support monitoring and accountability?

Lastly, ask which tools are available to support continuous monitoring.

This includes the dashboards, reporting mechanisms and audit artifacts that enable organizations to track the AI’s performance, fairness and compliance over time.

Governance tools make it easier for organisations to manage risks and controls proactively.

AI That Works Is Infrastructure, Not a Feature

Responsible AI is frequently discussed as a nice-to-have. In reality, it is an operational discipline.

For talent acquisition AI to scale safely, it must be backed by:

- Well-defined governance frameworks.

- Independent audits.

- Coverage of protected characteristics.

- Transparency and explainability.

- Continuous validation.

Together, these elements turn AI into enterprise-ready infrastructure.

The Key Takeaway

Artificial intelligence has the potential to create substantial impact in the recruitment industry. Yet that value is sustainable only when there is trust built into the system.

Before adopting or expanding the use of AI in recruitment, organisations should demand clear answers to these five questions, not in theory but in practice. Claims alone are not enough; enterprises should demand evidence.

When AI can be trusted, it does more than speed up hiring. It makes hiring fairer, safer and more defensible.

Gaining stakeholder trust in AI-driven hiring goes far beyond ticking regulatory boxes. It is about ensuring that accountability is ingrained in every decision made, every algorithm developed and every employee recruited. The companies that will have the biggest impact on the future talent market are not necessarily those using the most advanced AI; they are the ones that dare to ask serious questions today.

Live Transparency: The Warden AI Assurance Dashboard

One of the most powerful tools for building this trust is live, transparent reporting. The Warden AI Assurance Dashboard provides real-time visibility into how AI systems perform across critical fairness metrics.

This publicly accessible dashboard shows:

- Bias testing results across protected characteristics like gender, race, age, and disability status

- Compliance status with regulations including NYC Local Law 144, EU AI Act, and US employment standards

- Selection rate ratios that demonstrate equitable treatment across demographic groups

- Audit timestamps proving continuous monitoring, not one-time assessments

Unlike traditional compliance approaches where audits happen behind closed doors, this dashboard makes AI accountability visible to all stakeholders: candidates, employers, regulators and the public. It is proof that responsible AI is not just a promise but a verifiable practice.

Organizations can point to this live evidence when asked about their AI governance, transforming abstract fairness claims into concrete, measurable outcomes.
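For readers who want to see what a selection rate ratio looks like in practice, here is a minimal Python sketch. It is not how the Warden AI dashboard computes its figures. The function names, the made-up sample data and the 0.8 threshold (the commonly cited four-fifths rule of thumb) are all illustrative assumptions. Each group's selection rate is divided by the rate of the most-selected group, and groups that fall below the threshold are flagged for review.

```python
from collections import Counter


def selection_rates(outcomes):
    """Return {group: share of candidates selected} from (group, selected) pairs."""
    totals = Counter()
    selected = Counter()
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no candidates were selected in any group")
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """List groups whose ratio is below the threshold (0.8 is an assumed rule of thumb)."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical data for illustration only: 100 candidates per group.
outcomes = (
    [("group_a", True)] * 50 + [("group_a", False)] * 50
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

rates = selection_rates(outcomes)
ratios = impact_ratios(rates)
flagged = flag_groups(ratios)

for group in sorted(ratios):
    print(f"{group}: rate={rates[group]:.2f}, ratio={ratios[group]:.2f}")
print("Below threshold:", flagged)
```

A ratio below the threshold is a signal to investigate, not a legal finding in itself. A real audit would also need to consider sample sizes, intersectional categories and the specific requirements of each jurisdiction.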


Ready to see how responsible AI transforms recruitment? Learn about our platform, which combines autonomous intelligence with transparent governance to support more effective hiring decisions while maintaining fairness, compliance and the candidate experience.
